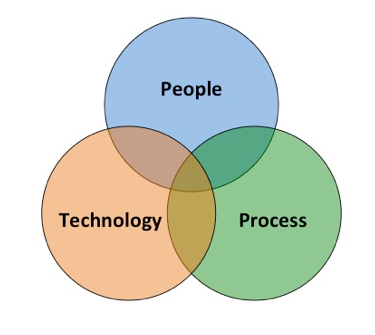

Management accountants have an opportunity to play a central role in the data governance process, including ensuring that data quality is maintained at its foundation. One way to help illustrate this opportunity is through the People-Process-Technology (PPT) model (see Figure 1):

- People: the professionals involved;

- Process: the processes and procedures the professionals follow, including guidance, standards, frameworks, and methodologies; and

- Technology: the technologies used to enable, protect, control, and support the people and the processes.

Management accountants sit at the nexus of the PPT model since they manage internal controls, monitor performance, assess risk, budget, forecast, and provide various types of measurement and reporting. The same is true for their role in maintaining effective data governance and the protection of data quality.

Management accountants are centrally involved in reporting (which increasingly includes nonfinancial reporting) and are employed, in part, to ensure the integrity of the information disclosed in reports, whether internally for management or externally to stakeholders (e.g., investors, analysts, regulators). Management accountants are a key segment of the People component of the PPT model, while the reporting processes, accounting standards, internal control frameworks, risk management methodologies, data governance policies, and quality control are part of the Process component.

Regulatory authorities around the world today increasingly require companies to produce digital external compliance reports using a global technology standard called XBRL (eXtensible Business Reporting Language). XBRL is used to encode (or tag) financial and business facts so that the information in the reports can be read automatically by XBRL-enabled software tools and more easily sorted, compared, discovered, and repurposed by software. XBRL is one of the components of Technology in the PPT model, along with other software, hardware, and technology solutions.
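Here is a minimal sketch of what tagging looks like to software. It uses Python's standard library to read facts from a hand-written, heavily simplified XBRL instance fragment. The placeholder CIK, the context ID `FY2015`, and the amounts are hypothetical. The `us-gaap` namespace URI follows the pattern of the FASB's U.S. GAAP taxonomy, but its date changes with each release. A real filing would also carry a schema reference, linkbases, and many more facts.

```python
import xml.etree.ElementTree as ET
from decimal import Decimal

# Hypothetical, heavily simplified XBRL instance fragment (not a complete, valid filing).
INSTANCE = """<xbrli:xbrl
    xmlns:xbrli="http://www.xbrl.org/2003/instance"
    xmlns:iso4217="http://www.xbrl.org/2003/iso4217"
    xmlns:us-gaap="http://fasb.org/us-gaap/2015-01-31">
  <xbrli:context id="FY2015">
    <xbrli:entity>
      <xbrli:identifier scheme="http://www.sec.gov/CIK">0000000000</xbrli:identifier>
    </xbrli:entity>
    <xbrli:period>
      <xbrli:startDate>2015-01-01</xbrli:startDate>
      <xbrli:endDate>2015-12-31</xbrli:endDate>
    </xbrli:period>
  </xbrli:context>
  <xbrli:unit id="USD"><xbrli:measure>iso4217:USD</xbrli:measure></xbrli:unit>
  <us-gaap:Revenues contextRef="FY2015" unitRef="USD" decimals="-6">125000000</us-gaap:Revenues>
  <us-gaap:NetIncomeLoss contextRef="FY2015" unitRef="USD" decimals="-6">9000000</us-gaap:NetIncomeLoss>
</xbrli:xbrl>"""

XBRLI_NS = "http://www.xbrl.org/2003/instance"

def extract_facts(xml_text):
    """Return (namespace, concept, contextRef, unitRef, value) for each tagged fact."""
    root = ET.fromstring(xml_text)
    facts = []
    for elem in root:
        if not elem.tag.startswith("{"):
            continue  # ignore anything outside a namespace
        # ElementTree exposes qualified names as "{namespace-uri}LocalName".
        ns, concept = elem.tag[1:].split("}", 1)
        if ns == XBRLI_NS:
            continue  # contexts and units describe facts; they are not facts themselves
        facts.append((ns, concept, elem.get("contextRef"),
                      elem.get("unitRef"), Decimal(elem.text.strip())))
    return facts

for fact in extract_facts(INSTANCE):
    print(fact)
```

The amount is never read from a position on a page. The tag name supplies the concept, `contextRef` supplies the period and entity, and `unitRef` supplies the currency. That structure is what lets software sort and compare facts automatically.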

In 2009, the U.S. Securities & Exchange Commission (SEC) mandated that companies use XBRL within their digitally filed financial statements and reports to the Commission. As the main oversight authority for the world’s largest capital market, the SEC required XBRL technology in part as a way to enable it (as well as stakeholders such as investors, analysts, and the public) to aggregate, consume, compare, and analyze large volumes of information in a format that uses a set of commonly agreed-upon data definitions (i.e., a standard set of tags). This standard set of data definitions, or taxonomy, is intended to enhance the comparability of information across reports during analysis and to improve the quality of the information used in that analysis. For example, if data disclosed within an SEC compliance report (such as a 10-K filing) is tagged accurately (according to the XBRL standard and the SEC’s XBRL filing rules), then users of this data should, in theory, have access to quality, comparable, reliable information. In other words, if People (e.g., those who gather, validate, and tag the data: report preparers, accountants, attorneys, compliance professionals) follow proper Process (e.g., SEC XBRL filing rules, XBRL technical guidance, relevant accounting standards) using Technology (e.g., XBRL-enabled software tools, internal ERP/reporting systems, the SEC’s EDGAR filing system) the way it was designed and intended, data quality should theoretically be at an acceptable level.

In the United States, however, data quality concerns about information disclosed to the SEC in XBRL format plague the market. In late 2012, *An Evaluation of the Current State and Future of XBRL and Interactive Data for Investors and Analysts*, a report issued by Columbia Business School’s Center for Excellence in Accounting and Security Analysis (CEASA), highlighted problems with data quality in filings to the SEC. A key focus of the report was the use of custom XBRL tags (also called extensions). Some companies created these tags to identify additional information that they wanted the market to receive as part of the filing but that wasn’t included in the core set of data definitions in the U.S. Generally Accepted Accounting Principles (GAAP) taxonomy. A challenge arises when two or more companies define their own custom XBRL tags for the same business fact, which makes comparing that information with software tools and analytical processes very difficult and time-consuming. (In a market in which decisions are made within fractions of seconds, there often isn’t much room for time-consuming analysis if one wants to take advantage of a market opportunity.)
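The comparability problem with extensions can be sketched in a few lines of Python. Three hypothetical companies report the same business fact. Two use the standard U.S. GAAP concept `Revenues`, and one uses a custom extension. The company names, the `xyz` prefix, the extension name `NetSalesOfWidgets`, and all figures are assumptions for illustration only.

```python
# Hypothetical tagged facts: (company, namespace prefix, concept, value in USD).
facts = [
    ("Company A", "us-gaap", "Revenues", 125_000_000),
    ("Company B", "xyz", "NetSalesOfWidgets", 98_000_000),  # custom extension
    ("Company C", "us-gaap", "Revenues", 143_000_000),
]

STANDARD_PREFIX = "us-gaap"

def compare_concept(facts, concept):
    """Return {company: value} for companies that tagged `concept` from the standard taxonomy."""
    return {company: value for company, prefix, name, value in facts
            if prefix == STANDARD_PREFIX and name == concept}

def extension_share(facts):
    """Fraction of facts tagged with a non-standard (extension) concept."""
    custom = sum(1 for _, prefix, _, _ in facts if prefix != STANDARD_PREFIX)
    return custom / len(facts)

print(compare_concept(facts, "Revenues"))
# {'Company A': 125000000, 'Company C': 143000000}  (Company B is silently missing)
print(round(extension_share(facts), 2))
# 0.33
```

A tool that queries the standard revenue concept returns only two of the three companies. To include the third, someone must find Company B's extension and map it by hand, which is exactly the kind of slow, manual work described above.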

Additional concerns were raised that information was being tagged incorrectly because of a lack of understanding of the rules and accounting standards, which indicates a potential breakdown in one or more of the PPT model components. One example might be a company tagging a dividend payout as a credit rather than a debit. This might signify that the People may not understand the accounting or filing rules and that the software (Technology) might not be able to catch the error during the validation process.
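Software can catch some errors of this kind mechanically. Below is a minimal sketch of a sign check that flags payment facts reported as negative numbers, which is one common way a dividend payout ends up with the wrong sign. The rule table is hypothetical and deliberately tiny. It is not the SEC's, XBRL US's, or any vendor's actual rule set. Real validators also draw on taxonomy metadata such as each element's balance type.

```python
from dataclasses import dataclass

@dataclass
class Fact:
    concept: str   # qualified concept name, e.g. "us-gaap:PaymentsOfDividends"
    value: float   # amount as tagged in the filing
    context: str   # reporting period identifier (hypothetical)

# Hypothetical rule table: payment concepts whose values should not be negative.
NON_NEGATIVE_CONCEPTS = {
    "us-gaap:PaymentsOfDividends",
    "us-gaap:PaymentsForRepurchaseOfCommonStock",
}

def check_signs(facts):
    """Return a message for every fact that breaks the non-negative rule."""
    errors = []
    for f in facts:
        if f.concept in NON_NEGATIVE_CONCEPTS and f.value < 0:
            errors.append(f"{f.concept} in {f.context} is negative ({f.value:,.0f})")
    return errors

filing = [
    Fact("us-gaap:PaymentsForRepurchaseOfCommonStock", 2_000_000, "FY2015"),
    Fact("us-gaap:PaymentsOfDividends", -4_500_000, "FY2015"),  # sign error
]

for message in check_signs(filing):
    print(message)
# us-gaap:PaymentsOfDividends in FY2015 is negative (-4,500,000)
```

Such a check encodes Process knowledge (how a payment should be reported) in Technology. It flags the problem before the filing reaches users, even when the preparer did not notice it.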

In September 2013, Representative Darrell Issa (R.-Calif.), then chairman of the House Oversight Committee, sent a comment letter to SEC Chair Mary Jo White in which he referenced 1.4 million errors in XBRL filings to the SEC that “lead to skepticism about the usability of the data.” It was suggested that concerns over data quality, reliability, and utility had caused users to lose faith in the entire data set, calling into question its usability for analysis.

There are some bright spots. In September 2014, Mark J. Flannery, SEC chief economist and director, addressed the Data Transparency Coalition’s Fall Policy Conference attendees in Washington, D.C., on the topic of XBRL data quality and some of the improvements being seen. Flannery stated, “It is very encouraging that large and midsize companies are demonstrating continuous improvement. Smaller companies have had less time to comply. We should not be surprised at this point that their improvement is slower, given their more limited resources. Our staff analysis shows that there continues to be significant innovation in the XBRL-related services industry—there are currently more than 30 third-party XBRL providers compared to 11 in 2009. Moreover, the creation of tagged data output has resulted in greater automation within the internal reporting process at companies who now can use new vendor products that integrate XBRL tagging into their financial reporting tools. As their product and service offerings continue to improve and their market shares continue to shift to reflect their relative improvements, we should expect to see tangible benefits among all sized filers.”

XBRL International, Inc. (XII), the creator and manager of the global XBRL standard, also has a series of initiatives designed to enhance data quality. Its XBRL Best Practices Board (BPB), for which I am the current vice chair, is responsible for developing guidance and resources to help the market better understand, adopt, and use XBRL for internal and external disclosures. Current efforts include the development of the XBRL implementation life cycle to help organizations design and develop their own reporting programs. Task forces have been formed to study best practices surrounding the use of extensions as well as taxonomy architecture; these are designed to enhance the People and Process parts of the PPT model. XII’s XBRL Standards Board also has established an Open Information Model working group to develop a framework for XBRL to work with other current technologies such as JSON (JavaScript Object Notation). This work will enhance the Technology component of the model.

As with any new disclosure requirement, there’s bound to be a transition period in which errors and quality-control issues will arise. Management accountants play important roles in helping to reduce those errors and enhance the quality of information through better training and education, stronger technology tools, and increased awareness of potential problem points.

In fact, XBRL US recently formed the Center for Data Quality in June 2015 to “address public concerns about XBRL and improve the quality and usability of XBRL-tagged financial data filed with the SEC.” The Center’s goals are to:

- Develop standardized guidance on the consistent tagging of data;

- Put guidance from the Financial Accounting Standards Board (FASB) and the SEC into computer code, thereby automating its availability; and

- Give public companies new tools to facilitate high-quality XBRL filings.

Currently, the Center is “developing guidance and validation rules for XBRL tagging with the goal of helping companies file consistently accurate XBRL disclosures” with the SEC. The Center’s Data Quality Committee will focus first on rules that test for input errors and verify compliance with current SEC and FASB guidance. Its next step will be to look at guidance for uniform, consistent tagging (e.g., whether and where to create extensions). The Center’s efforts are intended to help eliminate some sources of data quality errors. These efforts include developing additional guidance (i.e., information to better inform the Process) and bringing together several members of the Technology component of the PPT model to agree on a uniform set of rules to embed in their software tools.
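One class of input error that validation rules can test for is arithmetic inconsistency, where a reported total does not equal the sum of its tagged components. The sketch below mimics, in greatly simplified form, the summation checks that XBRL calculation relationships support. The concepts, values, and tolerance are assumptions. The code does not reproduce any rule actually published by the Center.

```python
# Hypothetical calculation relationships: total concept -> list of (component, weight).
CALCULATIONS = {
    "LiabilitiesAndStockholdersEquity": [
        ("Liabilities", 1),
        ("StockholdersEquity", 1),
    ],
}

def check_calculations(values, calculations, tolerance=500_000):
    """Return (total, reported, computed) for each total that misses the sum of its components.

    `tolerance` is an assumed allowance of half a $1 million rounding unit.
    """
    failures = []
    for total, components in calculations.items():
        if total not in values or any(c not in values for c, _ in components):
            continue  # skip checks whose inputs were not all reported
        computed = sum(weight * values[c] for c, weight in components)
        if abs(values[total] - computed) > tolerance:
            failures.append((total, values[total], computed))
    return failures

reported = {
    "Liabilities": 310_000_000,
    "StockholdersEquity": 190_000_000,
    "LiabilitiesAndStockholdersEquity": 510_000_000,  # keying error: should be 500,000,000
}

print(check_calculations(reported, CALCULATIONS))
# [('LiabilitiesAndStockholdersEquity', 510000000, 500000000)]
```

When the same check is embedded in every vendor's tool, filers get consistent feedback no matter which software they use. That is the benefit of agreeing on a uniform rule set.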

Technology and Process are two important components of the model. But enhancements to the People component also are necessary. This includes better training and education of the company professionals involved in creating and communicating reliable, usable information to stakeholders.

This is where management accountants can truly be part of the solution to data quality concerns. Not only are they members of the People component, but they also have the opportunity to sit at the nexus and help organizations overcome challenges in People, Process, and Technology in order to maintain effective data governance and quality, as Figure 2 shows.

Information is the lifeblood of any business today and a valuable asset. The same can be said about the stewards of that information—you, the management accountant—who can guide the talented people, often complex processes, and ever-changing technologies to higher standards of data quality through an integrated, effective data governance approach.

April 2016