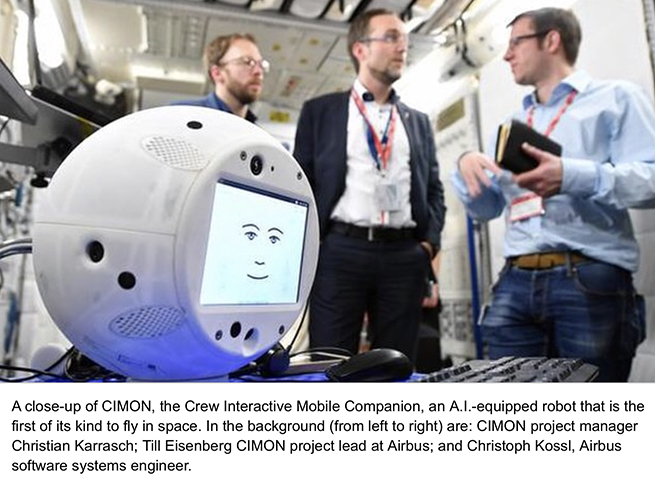

CIMON is an AI-enabled robot that’s a little larger than a basketball. Its brain comes from IBM’s Watson program, and its body, built by Airbus, was created using 3-D printing. According to Manfred Jaumann, head of microgravity payloads at Airbus, “CIMON will be the first AI-based mission and flight assistance system.”

He explained, “We are the first company in Europe to carry a free flyer, a kind of flying brain, to the ISS and to develop artificial intelligence for the crew on board the space station.” The 11-pound floating sphere will serve as a hands-free assistant for the astronauts: it will display procedures for some of the experiments, act as a flying camera, and function as the space station’s voice-controlled database.

A HUMAN COMPANION

CIMON was specifically trained to recognize the face and voice of the German astronaut and geophysicist Alexander Gerst. The two have practiced routines that will be used during the European Space Agency’s Horizons mission, which runs from June 2018 to October 2018, after which the robot and materials from other experiments will be sent back to Earth. CIMON’s exchanges with the crew will be conducted in English, and the robot’s screen “face” is equipped with a library of expressions to help it form an emotional connection with Gerst and the other astronauts.

When Gerst calls, CIMON locates the source of the sound and maneuvers toward its training partner. Floating at eye level, the robot uses its front camera to check who is speaking and responds accordingly. Its AI systems can detect emotional states in human speech, and those cues become part of its interpretation and are integrated into the ongoing conversation.

CIMON doesn’t have articulated body parts, but it can fly around in zero gravity with short, coordinated bursts of air from more than a dozen embedded propellers.

ROBOT GOALS

There are three categories of goals for the floating robot. In the short term, CIMON should help answer some basic questions about whether AI-enabled assistants are suitable for other space missions.

Beyond that, what is learned from the interactions between the humans and the robot could be important when planning the size and makeup of crews for longer stays on the space station or for longer space flights.

An IBM blog post reporting on the project outlined a third level of expectations: “The CIMON project will also be devoted to psychological group effects that can develop in small teams over a long period of time and occur during long-term space missions. CIMON’s creators are confident that social interactions between humans and machines, in this case between astronauts and a space attendant equipped with emotional intelligence, could make an important contribution to mission success. We predict that assistance systems of this kind also have a bright future right here on earth, such as in hospitals or to support nursing care.”

CIMON is the first AI-enabled, cloud-connected robot on an actual space mission. One of the first notable examinations of human and machine intelligence on a space flight was fictional: the epic science-fiction film 2001: A Space Odyssey. That was half a century ago (1968), but the deep impression left by HAL, the film’s shipboard computer, still haunts the public consciousness on matters relating to humans, their learning machines, and spacecraft.

With CIMON, IBM and Airbus have created the first Watson-class robot assistant for space travelers, and the small, companionable, and very smart robot is about as unlike HAL as you could hope for.

https://www.youtube.com/watch?v=4QsfsEfAl2s